High-Availability Orchestration: Bridging Redis and ECS for Scalable Microservices

A deep dive into distributed service communication. Learn how to architect a multi-tiered environment bridging an EC2-hosted Redis node with an ECS cluster, utilizing private service discovery, dual Application Load Balancers, and hardened IAM security.

In a distributed microservices architecture, the challenge isn't just running containers; it is orchestrating the communication between them across isolated network stacks. This guide breaks down the architecture of a high-availability geolocation system, bridging a persistent Redis data node with a scalable Amazon ECS cluster.

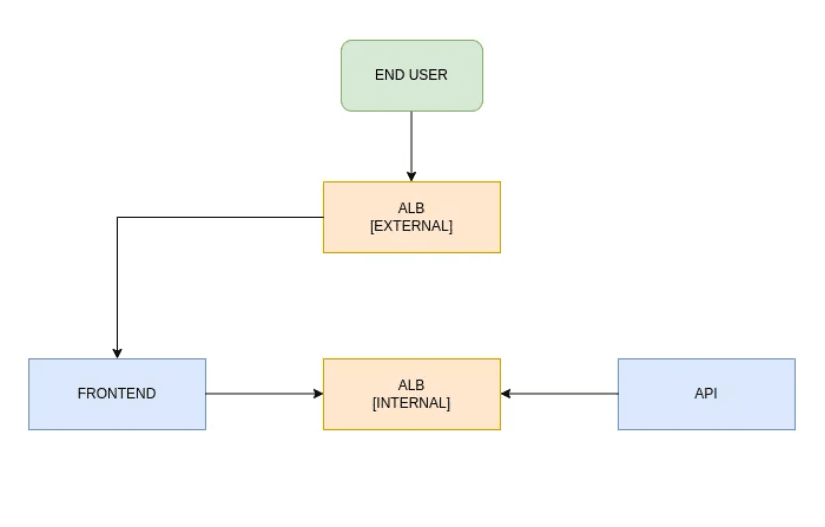

By leveraging a dual-load-balancer strategy (Internal vs. External) and Route 53 private service discovery, we create a resilient pipeline capable of handling both internal state and public traffic.

Phase 1: The Persistence Layer (Redis on Host Network)

To keep throughput high and latency low, the Redis node runs on an EC2 instance in Docker's host network mode, so it binds directly to the instance's network interface. This bypasses the NAT and port-mapping overhead of the Docker bridge.

Phase 2: Private Service Discovery

We establish a Route 53 Private Hosted Zone linked to our default VPC. This creates a "Logical DNS" layer, mapping the EC2 instance's private IP to a human-readable internal domain: redis.nidhin.local. This decoupling allows our ECS tasks to find the data node without needing hardcoded IP addresses.
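As a rough way to confirm that the private record resolves and that Redis answers behind it, a reachability check could look like the sketch below. It assumes Redis listens on its default port 6379 without AUTH or TLS, which the original setup does not state, and the function name `redis_ping` is made up for illustration.

```python
import socket


def redis_ping(host: str, port: int = 6379, timeout: float = 2.0) -> bool:
    """Return True if the server at host:port answers PING with +PONG.

    Assumes an unauthenticated, non-TLS Redis endpoint (an assumption,
    not something the original setup confirms).
    """
    # create_connection resolves the hostname first, so a private DNS
    # name such as redis.nidhin.local works from inside the VPC.
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(b"PING\r\n")  # inline command form accepted by Redis
        reply = conn.recv(64)
    return reply.startswith(b"+PONG")


# Example, run from an instance or task inside the VPC:
#   redis_ping("redis.nidhin.local")
```

Because the caller only knows the DNS name, the EC2 instance behind it can be replaced without touching the ECS tasks, as long as the record is updated.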

Phase 3: The ECS Cluster Architecture

The compute layer is built on an ECS Cluster utilizing the EC2 Launch Type. This provides full control over the underlying instances while offloading container health and lifecycle management to the AWS control plane.

- IAM Security & Task Roles

We apply the Principle of Least Privilege by creating a dedicated RedisTaskRole.

Role: RedisTaskRole (Use Case: Elastic Container Service Task)

Policy: SecretsManagerReadWrite (enables secure retrieval of API keys and database credentials). Note that this managed policy also grants write access; a custom policy limited to secretsmanager:GetSecretValue on the specific secret is a tighter fit for least privilege.

- Task Definition & Environment Injection

The API service runs 2 tasks for redundancy. We use the Bridge Network Mode for container isolation with dynamic host port mapping (host port 0 : container port 8080), so each task is assigned a free host port at launch.

Critical Environment Variables:

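REDIS_HOST / REDIS_PORT: Where the API reaches Redis (the redis.nidhin.local record from Phase 2 and its port).

API_KEY_FROM_SECRETSMANAGER: Keeps the API key out of the task definition by sourcing it from Secrets Manager.

SECRET_NAME / SECRET_KEY: The secret to look up and the key to read from it, fetched under the task role's IAM permissions.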
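To make the task definition concrete, here is a minimal sketch that builds it as a Python dict in the shape the ECS RegisterTaskDefinition API expects. The family name, container name, memory value, Redis port 6379, and the value "true" for API_KEY_FROM_SECRETSMANAGER are assumptions for illustration; only the network mode, the port mapping, and the variable names come from the setup described above.

```python
import json


def build_api_task_definition(
    image: str, task_role_arn: str, secret_name: str, secret_key: str
) -> dict:
    """Return a task definition dict for the API container."""
    return {
        "family": "api-geolocation",  # hypothetical family name
        "taskRoleArn": task_role_arn,  # ARN of RedisTaskRole
        "networkMode": "bridge",
        "requiresCompatibilities": ["EC2"],
        "containerDefinitions": [
            {
                "name": "api",  # hypothetical container name
                "image": image,
                "memory": 256,  # assumed hard limit in MiB
                "essential": True,
                # hostPort 0 requests a dynamically assigned host port.
                "portMappings": [
                    {"containerPort": 8080, "hostPort": 0, "protocol": "tcp"}
                ],
                "environment": [
                    {"name": "REDIS_HOST", "value": "redis.nidhin.local"},
                    {"name": "REDIS_PORT", "value": "6379"},  # assumed port
                    # Assumed to be a simple on/off flag.
                    {"name": "API_KEY_FROM_SECRETSMANAGER", "value": "true"},
                    {"name": "SECRET_NAME", "value": secret_name},
                    {"name": "SECRET_KEY", "value": secret_key},
                ],
            }
        ],
    }


task_definition = build_api_task_definition(
    image="example/api:latest",  # placeholder image
    task_role_arn="arn:aws:iam::123456789012:role/RedisTaskRole",  # placeholder account
    secret_name="example/secret",  # placeholder
    secret_key="API_KEY",  # placeholder
)
print(json.dumps(task_definition, indent=2))
```

The printed JSON can be saved to a file and registered with the AWS CLI, or the dict can be passed as keyword arguments to boto3's `register_task_definition`.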

Phase 4: The Internal Load Balancer (Service-to-Service)

To facilitate secure communication between the frontend and the API, we deploy an Internal Application Load Balancer (ALB) named ipgeolocation-srv-discovery.

Topology: Internal ALB across AZs ap-south-1a and ap-south-1b.

Discovery Record: In Route 53, api.nidhin.local points to the internal ALB's DNS name, creating a stable entry point for the frontend services.
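One way to express the discovery record is as a Route 53 change batch. The sketch below assumes an alias A record (a CNAME would also work; the original does not say which was used). The ALB DNS name and hosted zone ID shown are placeholders, not real values.

```python
def alias_change_batch(
    record_name: str, alb_dns_name: str, alb_hosted_zone_id: str
) -> dict:
    """Build a ChangeBatch that points record_name at an ALB via an alias."""
    return {
        "Comment": "Point the internal API name at the internal ALB",
        "Changes": [
            {
                "Action": "UPSERT",  # create the record or update it in place
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "A",
                    "AliasTarget": {
                        # Hosted zone ID of the load balancer itself,
                        # not of the private hosted zone.
                        "HostedZoneId": alb_hosted_zone_id,
                        "DNSName": alb_dns_name,
                        "EvaluateTargetHealth": False,
                    },
                },
            }
        ],
    }


batch = alias_change_batch(
    record_name="api.nidhin.local",
    alb_dns_name="internal-example.ap-south-1.elb.amazonaws.com",  # placeholder
    alb_hosted_zone_id="ZEXAMPLE",  # placeholder
)
```

With boto3, `batch` would be passed as the `ChangeBatch` argument of `change_resource_record_sets`, together with the ID of the private hosted zone.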

Phase 5: Executing the API Service

With the networking logic in place, we initialize the api-geolocation-service.

Redundancy: 2 Tasks (distributed across EC2 instances).

Connectivity: Integrated with the internal ALB on port 80.

Verification: Executing a curl from a cluster instance to the Internal ALB DNS confirms that the request is successfully traversing the VPC to the backend task.
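The curl check can also be scripted. The following is a small sketch using only the Python standard library; the helper name `http_status` is made up here, and the URL in the usage comment assumes the API answers on the root path, which the original does not specify.

```python
import urllib.error
import urllib.request


def http_status(url: str, timeout: float = 3.0) -> int:
    """Return the HTTP status code for a GET request to url."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses still prove the request reached a backend.
        return err.code


# Example, run from a cluster instance inside the VPC:
#   http_status("http://api.nidhin.local/")
```

A connection error or timeout (raised as `urllib.error.URLError` or `TimeoutError`) points at security groups, DNS, or target health rather than at the application itself.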

Phase 6: The Frontend Gateway (Public Access)

Finally, we architect the entry point for end-users. This requires a dedicated Frontend Task Definition and a Public-Facing ALB.

- Frontend Task Setup

Config: Networking in bridge mode with dynamic host port mapping.

Env Vars: Preconfigured to point to the internal api.nidhin.local endpoint.

- External Load Balancer & SSL

To encrypt traffic between end users and the load balancer, the external ALB is configured with:

HTTPS Listener (443): Encrypted end-user communication.

HTTPS Redirection: Port 80 traffic is automatically redirected to 443.

Target Group Management: Routes external traffic to the dynamically assigned host ports of the frontend tasks.
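The two listeners can be sketched as request dicts in the shape of the Elastic Load Balancing v2 CreateListener API. The ARNs are placeholders, and the permanent redirect (HTTP_301) is an assumption; the original only says that port 80 traffic is sent to 443.

```python
def build_listeners(
    load_balancer_arn: str, certificate_arn: str, target_group_arn: str
) -> list[dict]:
    """Return CreateListener parameters for the HTTPS and redirect listeners."""
    https_listener = {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": "HTTPS",
        "Port": 443,
        "Certificates": [{"CertificateArn": certificate_arn}],
        # Forward decrypted traffic to the frontend target group.
        "DefaultActions": [
            {"Type": "forward", "TargetGroupArn": target_group_arn}
        ],
    }
    http_redirect = {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": "HTTP",
        "Port": 80,
        "DefaultActions": [
            {
                "Type": "redirect",
                "RedirectConfig": {
                    "Protocol": "HTTPS",
                    "Port": "443",  # the API expects the port as a string
                    "StatusCode": "HTTP_301",  # assumed permanent redirect
                },
            }
        ],
    }
    return [https_listener, http_redirect]


listeners = build_listeners(
    load_balancer_arn="arn:aws:elasticloadbalancing:placeholder-alb",
    certificate_arn="arn:aws:acm:placeholder-cert",
    target_group_arn="arn:aws:elasticloadbalancing:placeholder-tg",
)
```

Each dict could be passed as keyword arguments to boto3's `elbv2.create_listener`. Because the frontend tasks use dynamic host ports, the target group tracks whichever ports ECS registers for them.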

Phase 7: Global Verification

The architecture is complete once a public Route 53 record (e.g., frontend.nidhin.com) points to the External ALB's DNS name.

Handshake: Users hit the External ALB via HTTPS.

Frontend Logic: The frontend task queries the Internal ALB (api.nidhin.local).

Data Retrieval: The API service fetches state from the Redis host node.

Security: All API keys are retrieved at runtime from Secrets Manager via the Task Role.
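The last step, fetching the API key at runtime, might look roughly like this inside the API container. The sketch assumes that SECRET_NAME holds the name of the secret and SECRET_KEY the JSON key within it; that reading of the variables is an assumption. The client is passed in so the function stays testable; in a real task it would be a boto3 Secrets Manager client, which picks up the task role's credentials automatically.

```python
import json
import os
from typing import Any, Mapping


def load_api_key(client: Any, env: Mapping[str, str] = os.environ) -> str:
    """Fetch the API key named by SECRET_NAME / SECRET_KEY.

    `client` is expected to offer get_secret_value(SecretId=...), as a
    boto3 Secrets Manager client does.
    """
    secret_name = env["SECRET_NAME"]  # assumed: name of the secret
    secret_key = env["SECRET_KEY"]  # assumed: JSON key inside the secret
    response = client.get_secret_value(SecretId=secret_name)
    # Assumes the secret is stored as a JSON object of key/value pairs.
    secret = json.loads(response["SecretString"])
    return secret[secret_key]


# Example inside the container (requires boto3 and the task role):
#   import boto3
#   api_key = load_api_key(boto3.client("secretsmanager"))
```

No credentials appear in the code or the task definition; access is granted or revoked by changing the policy attached to the task role.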

Conclusion

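This multi-tiered architecture removes single points of failure from the API tier, which runs as two tasks behind a load balancer spanning two Availability Zones. The single Redis node remains the one component without redundancy. By separating internal service discovery from public traffic and giving containers their own IAM task role, we get an environment that is secure and can scale horizontally by adding tasks and instances.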

Happy Shipping! 🚀🛰️
