The Orchestrated Handshake Between Emulator, Shell, and Kernel

The terminal is not a single program, but a multi-layered orchestration of specialized processes. This article explores the architectural relationship between the Terminal Emulator (the display), the Shell (the interpreter), and the Kernel, following the process lifecycle from initialization to execution.

In modern computing, the "Terminal" is often perceived as a monolithic black window. In reality, it is a sophisticated, multi-layered stack designed to bridge human intent with machine execution. To understand how a command is truly executed, one must unravel the modular relationship between the Terminal Emulator, the Shell, and the OS Kernel.

Each layer has a distinct responsibility, communicating through standardized streams of data to create the Command Line Interface (CLI) we interact with every day.

Phase 1: The Display Layer (Terminal Emulator)

The interface you interact with—apps like iTerm2, Windows Terminal, or Alacritty—is technically a Terminal Emulator. Its primary role is to provide a graphical bridge between the user's keyboard/monitor and the text-based world of the operating system.

Initialization Workflow:

- Graphical Instantiation: The emulator launches as a standard GUI process, requesting a window from the OS window manager.

- Environment Setup: It initializes display buffers, font rendering, and local configurations (colors, transparency, etc.).

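- The PTY Handshake: The emulator asks the kernel for a Pseudo-terminal (PTY), a pair of connected virtual devices. The emulator keeps one end (the master). The other end (the slave) looks like a hardware terminal to whatever program is attached to it, which will be the background shell.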
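The PTY pair can be sketched in a few lines of Python. This is a minimal illustration, assuming a Unix-like system; the function name `pty_round_trip` is hypothetical, and a real emulator would attach a shell to the slave end rather than read from it directly.

```python
import os
import tty


def pty_round_trip(data: bytes) -> bytes:
    """Write bytes into the master end of a PTY and read them from the slave end."""
    # Ask the kernel for a connected master/slave pair of file descriptors.
    master_fd, slave_fd = os.openpty()
    try:
        # Raw mode turns off echo and line editing so bytes pass through unchanged.
        tty.setraw(slave_fd)
        # Emulator side: pretend the user typed something.
        os.write(master_fd, data)
        # Shell side: read what "arrived from the keyboard".
        received = b""
        while len(received) < len(data):
            received += os.read(slave_fd, 1024)
        return received
    finally:
        os.close(master_fd)
        os.close(slave_fd)


if __name__ == "__main__":
    print(pty_round_trip(b"ls -l\n"))
```

Whatever is written to the master end shows up as input on the slave end, which is why the shell can treat the PTY as if it were a physical keyboard and screen.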

Phase 2: The Logic Layer (The Shell)

Once the terminal emulator is active, it spawns a child process: the Shell (e.g., Bash, Zsh, or Fish). While the emulator handles how things look, the shell handles what they mean.

The Interpreter Lifecycle:

- The Standby State: The shell sits in an infinite loop, waiting for data on its Standard Input (stdin).

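- The Handshake: As you type, the emulator writes your keystrokes to the PTY. By default, the kernel's terminal driver buffers the line and delivers it to the reading process when you press Enter; shells with interactive line editing usually read keystrokes one at a time and act once they see Enter.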

- Command Parsing: The shell receives the stream and parses it into tokens. Unless the first token names a builtin, function, or alias, the shell searches the directories listed in `$PATH` for the corresponding binary (e.g., `ls` or `python`).
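The parsing and lookup steps can be approximated in Python. This is a simplified sketch, not how any particular shell is implemented: `parse_command` and `find_in_path` are hypothetical names, `shlex` only approximates POSIX shell quoting, and real shells also handle builtins, aliases, expansions, and lookup caching.

```python
import os
import shlex
from typing import List, Optional


def parse_command(line: str) -> List[str]:
    """Split a command line into tokens, honoring quotes roughly as a POSIX shell does."""
    return shlex.split(line)


def find_in_path(name: str, path: Optional[str] = None) -> Optional[str]:
    """Return the first executable called `name` found in the PATH directories, or None."""
    if path is None:
        path = os.environ.get("PATH", "")
    for directory in path.split(os.pathsep):
        # An empty PATH entry conventionally means the current directory.
        candidate = os.path.join(directory or ".", name)
        if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
            return candidate
    return None


if __name__ == "__main__":
    tokens = parse_command("ls -l '/tmp/my dir'")
    print(tokens, find_in_path(tokens[0]))
```

The directories are tried in order, so the first match wins. That ordering is why two installations of the same tool can shadow each other depending on how `$PATH` is arranged.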

Phase 3: Command Execution & Process Forking

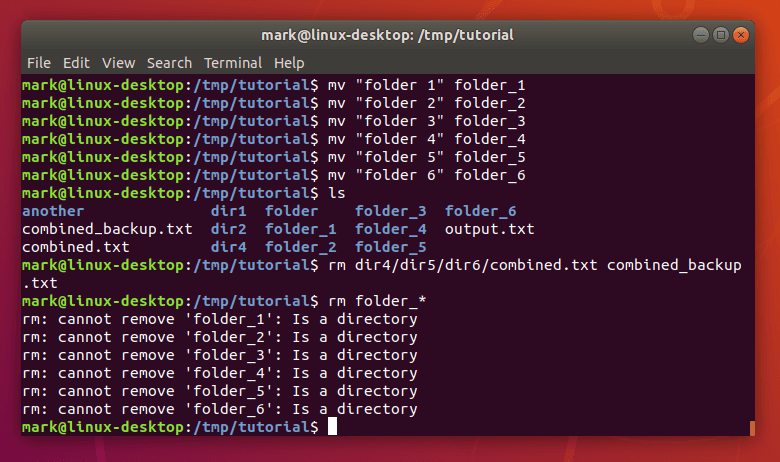

When the shell identifies an external command, it doesn't run it inside its own process. Instead, it asks the Kernel to create a new process for it.

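- Forking: The shell uses the `fork()` system call to create a near-identical copy of itself. The child gets its own process ID but inherits the shell's environment and open file descriptors, including the PTY.

- Execution: The child calls a function from the `exec()` family to replace its program image with the requested program (e.g., the code for `ls`). Meanwhile, the shell waits for a foreground command to finish.

- Telemetry: As the program runs, it writes data to Standard Output (stdout). Because the child inherited the shell's file descriptors, this output goes directly to the PTY rather than passing back through the shell. The terminal emulator reads it from the other end and renders it as text on your screen.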
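The fork-then-exec pattern can be sketched with Python's thin wrappers around the same system calls. This is a minimal illustration, assuming a Unix-like system; `run_command` is a hypothetical name, and a real shell adds job control, redirections, and signal handling on top.

```python
import os
from typing import List


def run_command(argv: List[str]) -> int:
    """Run argv in a child process the way a shell does, and return its exit code."""
    pid = os.fork()
    if pid == 0:
        # Child: replace this copy of the interpreter with the requested program.
        try:
            os.execvp(argv[0], argv)
        except OSError:
            # exec failed (for example, command not found); 127 is the
            # conventional shell exit code for that case.
            os._exit(127)
    # Parent: wait for the child, as a shell does for a foreground command.
    _, status = os.waitpid(pid, 0)
    if os.WIFEXITED(status):
        return os.WEXITSTATUS(status)
    # Killed by a signal: shells conventionally report 128 + signal number.
    return 128 + os.WTERMSIG(status)


if __name__ == "__main__":
    print("exit code:", run_command(["ls", "-l"]))
```

The parent never touches the child's output here. The child inherits the parent's stdout and writes to it directly.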

Phase 4: Kernel Involvement & Resource Management

The Kernel is the master orchestrator in this lifecycle. It acts as the traffic controller, ensuring that:

- Keystrokes from the physical hardware reach the correct terminal emulator (on a graphical desktop, by way of the display server or window system).

- CPU Time and Memory are allocated to the shell and any child processes it spawns.

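- Isolation is maintained so that a crash in the terminal emulator cannot corrupt the kernel or the memory of other processes. The shell runs in its own address space, although it usually still exits when the emulator dies, because closing the PTY causes the kernel to send it a hangup signal (SIGHUP).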
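The memory side of this isolation can be seen in a small experiment. This is an illustrative sketch, assuming a Unix-like system, with the hypothetical function name `parent_value_after_child_write`; it demonstrates separate address spaces between a parent and a forked child, not the emulator and shell specifically.

```python
import os


def parent_value_after_child_write() -> int:
    """Fork a child that modifies a variable, then return the parent's copy."""
    counter = 0
    pid = os.fork()
    if pid == 0:
        # Child: this changes only the child's private copy of the variable.
        counter += 100
        os._exit(0)
    # Parent: wait for the child, then look at our own copy.
    os.waitpid(pid, 0)
    return counter


if __name__ == "__main__":
    print(parent_value_after_child_write())
```

The parent's value is unchanged because the kernel gives each process its own view of memory after the fork.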

Phase 5: The Termination Signal (Exiting)

Closing a terminal is as much of a process as opening one.

- The Exit Signal: When you type `exit`, the shell runs its exit builtin. When you press `Ctrl+D` on an empty line, the shell sees an EOF (End of File) condition on its input.

- Process Teardown: The shell interprets either one as a request to shut down and exits its own process. Depending on the shell and its settings, remaining background jobs may be sent a hangup signal (SIGHUP) or left running.

- Emulator Response: The terminal emulator detects that its child process (the shell) has exited, typically through a SIGCHLD signal or through an end-of-file or error when reading from the PTY. It then closes its graphical window, returning control to the desktop environment.
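The emulator's side of the teardown can be sketched with Python's `pty` module. This is a minimal illustration, assuming a Unix-like system; `run_on_pty` is a hypothetical name, and a real emulator would render the bytes and watch the PTY from an event loop instead of blocking on reads.

```python
import os
import pty
from typing import List, Tuple


def run_on_pty(argv: List[str]) -> Tuple[bytes, int]:
    """Run argv attached to a new PTY; return everything it printed and its exit code."""
    # pty.fork() creates the PTY and forks; the child's stdin, stdout, and
    # stderr are connected to the slave end.
    pid, master_fd = pty.fork()
    if pid == 0:
        try:
            os.execvp(argv[0], argv)
        except OSError:
            os._exit(127)
    chunks = []
    while True:
        try:
            chunk = os.read(master_fd, 1024)
        except OSError:
            # On Linux, reading the master after the slave side has closed
            # raises an I/O error; treat that as "the shell is gone".
            break
        if not chunk:
            # Other systems report the same condition as end-of-file.
            break
        chunks.append(chunk)
    os.close(master_fd)
    # Collect the child's exit status so it does not linger as a zombie.
    _, status = os.waitpid(pid, 0)
    code = os.WEXITSTATUS(status) if os.WIFEXITED(status) else 128 + os.WTERMSIG(status)
    return b"".join(chunks), code


if __name__ == "__main__":
    output, code = run_on_pty(["sh", "-c", "echo bye"])
    print(output, code)
```

The read loop ending is the cue an emulator would use to close its window. The captured output may contain `\r\n` line endings, because the terminal driver translates newlines on the way out by default.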

Conclusion

The terminal is a masterpiece of modular design. By decoupling the display layer (Emulator) from the interpretation layer (Shell), Unix-like systems created a paradigm where you can swap emulators or shells independently, because each layer talks to the others only through the PTY and the kernel's process interfaces. Understanding this stack allows engineers to better troubleshoot environment issues, manage background processes, and architect efficient automation pipelines.

Happy Orchestrating! 🚀🛰️
