Architectural Slimming: Precision Engineering with Docker Multistage Builds

In production, every megabyte of an image is a liability for both security and deployment velocity. Discover how to use multistage builds to purge build-time dependencies, cutting the image footprint in the example below by more than 95% while hardening the final runtime environment.

In the world of container orchestration, image size is a useful proxy for engineering discipline. Large, bloated images do more than strain network bandwidth; they are a security liability. Every compiler, source header, and build-time library that leaks into your production image increases the attack surface of your container.

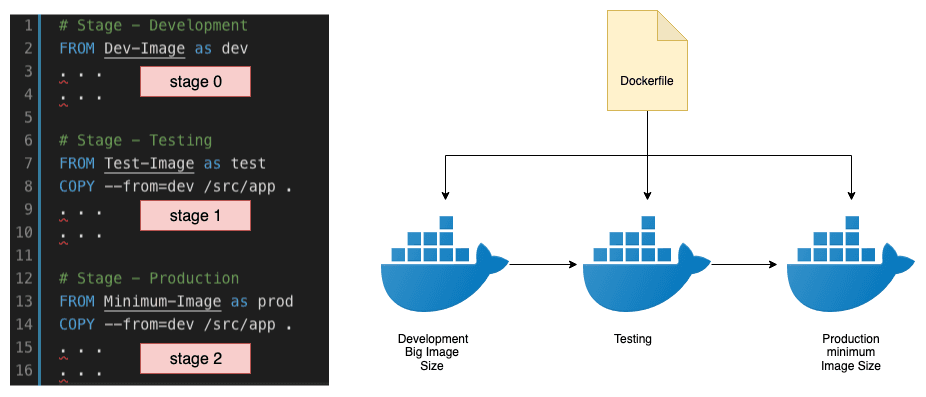

Multistage builds address this problem directly. They let you use multiple FROM statements in a single Dockerfile, each starting a new stage, which enables a clean separation between the compilation environment and the production runtime.

The Naive Approach: The Single-Stage Bottleneck

Consider a standard C application. A traditional single-stage Dockerfile must install a compiler (GCC) and the C library development headers (musl-dev) to turn the source code into an executable.
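A minimal sketch of such a single-stage Dockerfile, assuming an Alpine Linux base image (the usual pairing for the gcc and musl-dev packages) and a single source file named mypgm.c. The base-image tag, file name, and paths are illustrative assumptions, not the author's exact file:

```dockerfile
# Single-stage sketch (assumptions: Alpine base, one source file mypgm.c)
FROM alpine:3.19
WORKDIR /src

# Build toolchain: the compiler plus the musl C library headers.
# In a single-stage build, all of this ships in the final image.
RUN apk add --no-cache gcc musl-dev

# The source file also remains in the image after compilation
COPY mypgm.c .
RUN gcc -O2 -o /usr/local/bin/mypgm mypgm.c

CMD ["/usr/local/bin/mypgm"]
```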

The Result: The final image is approximately 156 MB. It contains the GCC compiler, object files, and development headers, none of which are required to run the final binary. This "leaked" overhead produces a heavy, insecure production image.

The Precision Approach: Multistage Orchestration

By leveraging multistage builds, we can treat the compilation phase as a "black box" that exists only during the build process. We then extract only the final, compiled binary and inject it into a pristine, lean runtime image.
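A minimal sketch of the multistage version, under the same assumptions as before (Alpine base, a single mypgm.c). The stage name compile and the binary name mypgm match the ones used in the analysis that follows; the base-image tag and paths are illustrative:

```dockerfile
# Stage 1, named "compile": holds the full toolchain and exists only at build time.
FROM alpine:3.19 AS compile
WORKDIR /src
RUN apk add --no-cache gcc musl-dev
COPY mypgm.c .
RUN gcc -O2 -o mypgm mypgm.c

# Stage 2: the runtime image. Only this stage's layers end up in the final image.
FROM alpine:3.19
# Copy just the compiled binary out of the "compile" stage's filesystem.
COPY --from=compile /src/mypgm /usr/local/bin/mypgm
CMD ["/usr/local/bin/mypgm"]
```

Because gcc on Alpine links against musl dynamically by default, the runtime stage reuses the same Alpine base so that the musl dynamic loader is present at runtime; moving the runtime stage to a different distribution would break that assumption.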

Technical Analysis of the Handshake:

Stage Persistence: The AS compile clause names the first stage. Once this stage completes, its layers may remain in Docker's build cache (which speeds up rebuilds), but none of its contents reach the final image unless a later stage explicitly copies them.

State Extraction: In the second stage, we use COPY --from=compile. This instruction explicitly reaches into the filesystem produced by the compile stage and retrieves only the mypgm binary.

Purging the Environment: The final image consists only of the second stage: its base image plus the binary copied into it. Since we never installed GCC or musl-dev in this stage, they are entirely absent from the final layers.

The Payoff: Performance and Security Metrics

The difference in output is substantial:

Naive Image Size: ~156 MB

Optimized Image Size: ~7.35 MB

Footprint Reduction: 95.3%
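The reduction figure follows directly from the two approximate sizes above:

$$
\text{reduction} = \frac{156 - 7.35}{156} = \frac{148.65}{156} \approx 0.953 = 95.3\%,
\qquad
\frac{156}{7.35} \approx 21.2
$$

In other words, the optimized image is roughly 21 times smaller than the naive one.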

Beyond the Megabytes:

- Hardened Security: By removing the C compiler and dev tools, you take away tooling an attacker could otherwise abuse and shrink the set of packages that can carry vulnerabilities. Even if an attacker gains shell access, they cannot simply compile new malicious binaries inside the container.

- Deployment Velocity: A 7 MB image pulls from a registry far faster than a 156 MB one, significantly reducing "Cold Start" time in serverless or auto-scaling environments.

- Declarative Maintenance: The Dockerfile becomes a self-documenting pipeline. Anyone reading the file can immediately see where the build happens and how the runtime is isolated.

Conclusion

Multistage builds are not just a trick for saving space; they are a fundamental best practice for production infrastructure. By decoupling build-time requirements from runtime needs, you create containers that are faster to ship, leaner, and more secure.
