Performance Engineering: Mastering In-Memory Caching with Redis and Memcached

Latency is the enemy of modern applications. Dive into the architectural mechanics of in-memory caching: the transition from disk-bound bottlenecks to RAM-speed retrieval, and how to implement resilient caching patterns using Redis and Memcached.

In the architecture of high-scale digital systems, the primary bottleneck is very often I/O latency. Traditional relational databases like MySQL or PostgreSQL are optimized for durability and complex relationships, but because their data ultimately lives on disk (SSD/HDD), retrieval speed is bounded by storage access times.

In-Memory Caching is the architectural solution to this bottleneck. By utilizing RAM (Random Access Memory) as a "Tier-1" data node, we can achieve sub-millisecond response times, shielding our primary databases from redundant, high-frequency query loads.

The Architectural Pattern: Lazy Loading (Cache-Aside)

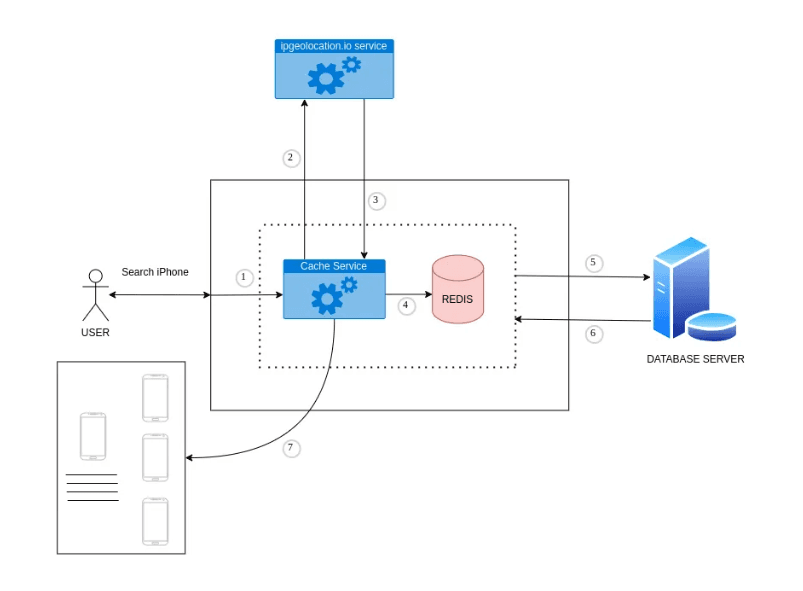

Most caching implementations follow the Cache-Aside strategy. In this pattern, the application checks the cache first for every data request and falls back to the main database only on a miss.

The Lifecycle of a Request:

- Search Phase: A client requests data (e.g., "iPhone Specs").

- Cache Interrogation: The application checks the In-Memory Cache (Redis/Memcached) for the specific key.

- The Cache Hit: If the data is found, it is returned instantly. The request never touches the main database.

- The Cache Miss: If the data is absent, the application fetches it from the main database, delivers it to the client, and stores it in the cache for the next request.
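The lifecycle above can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: `DictCache` is a hypothetical in-process stand-in for Redis/Memcached, and `load_from_db` is an assumed callable representing whatever query your main database needs.

```python
from typing import Callable, Dict, Optional


class DictCache:
    """In-process stand-in for Redis/Memcached (assumption, for illustration only)."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._store.get(key)

    def set(self, key: str, value: str) -> None:
        self._store[key] = value


def get_with_cache_aside(
    key: str,
    cache: DictCache,
    load_from_db: Callable[[str], str],
) -> str:
    """Return the value for `key`, consulting the cache before the database."""
    cached = cache.get(key)
    if cached is not None:
        # Cache hit: the main database is never touched.
        return cached
    # Cache miss: fetch from the database, then populate the cache
    # so the next request for this key becomes a hit.
    value = load_from_db(key)
    cache.set(key, value)
    return value
```

Calling `get_with_cache_aside("iphone-specs", cache, load_from_db)` twice should invoke `load_from_db` only once; the second call is answered from the cache.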

Real-World Deep Dive: IP-Geolocation Caching

To understand the impact of this architecture, consider an IP Geolocation service. Retrieving a user's location based on their IP involves an external API handshake which is slow and often metered (costly).

By introducing a caching layer:

- The First Handshake: The user's IP is resolved via a third party (e.g., ipgeolocation.io). This result is cached in Redis with a specific TTL (Time to Live).

- Subsequent Handshakes: Any future requests for that same IP are served directly from the local RAM.

- Result: Internal latency drops from ~200ms (API call) to <1ms (Redis lookup), while simultaneously reducing external API costs.
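A rough sketch of this flow, with the TTL made explicit. Everything named here is an assumption for illustration: `TTLCache` is a tiny in-process substitute for Redis, `resolve_remote` stands for the third-party API call, and the `geo:` key prefix and 24-hour TTL are arbitrary choices.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """Tiny in-process TTL cache standing in for Redis (illustrative assumption)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            # Entry outlived its TTL: drop it and report a miss.
            del self._store[key]
            return None
        return value

    def set(self, key: str, value: str, ttl_seconds: float) -> None:
        self._store[key] = (self._clock() + ttl_seconds, value)


GEO_TTL_SECONDS = 24 * 60 * 60  # hypothetical TTL; tune to how often IP data changes


def locate_ip(ip: str, cache: TTLCache, resolve_remote: Callable[[str], str]) -> str:
    """Resolve an IP to a location, paying for the remote call only on a miss."""
    key = f"geo:{ip}"  # hypothetical key naming scheme
    cached = cache.get(key)
    if cached is not None:
        return cached  # subsequent handshakes: served from RAM
    location = resolve_remote(ip)  # first handshake: slow, metered API call
    cache.set(key, location, GEO_TTL_SECONDS)
    return location
```

With the redis-py client, the equivalent write would be `client.set(key, location, ex=GEO_TTL_SECONDS)`, which lets Redis expire the entry on its own.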

The In-Memory Paradox: Speed vs. Volatility

The power of tools like Redis and Memcached comes from their storage medium: RAM.

| Feature | Caching Layer (Redis/Memcached) | Database Layer (MySQL/PostgreSQL) |
|---|---|---|
| Storage Medium | Volatile RAM | Persistent Disk (SSD/HDD) |
| Retrieval Speed | Sub-millisecond | Millisecond+ |
| Durability | Ephemeral (lost on power failure) | Durable (ACID-compliant) |
| Logic Type | Simple Key-Value / Data Structures | Complex Relational Querying |

Because RAM is volatile, we treat the cache as a Performance Accelerator, not a Source of Truth. Your primary, persistent database remains the final authority on data, while the cache serves as a high-speed replica of frequently accessed segments.
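One way to picture this division of responsibility is the sketch below. It assumes a simple policy that the article does not prescribe: writes go to the database first and the cached copy is dropped. Both stores are plain dictionaries standing in for the real systems, and `ProductStore` is a hypothetical name.

```python
from typing import Dict, Optional


class ProductStore:
    """Pairs a durable store with a disposable cache (both are plain dicts here)."""

    def __init__(self) -> None:
        self.database: Dict[str, str] = {}  # stand-in for MySQL/PostgreSQL
        self.cache: Dict[str, str] = {}     # stand-in for Redis/Memcached

    def write(self, key: str, value: str) -> None:
        # The database is the authority, so the write lands there first.
        self.database[key] = value
        # Drop any cached copy so the next read cannot serve stale data.
        self.cache.pop(key, None)

    def read(self, key: str) -> Optional[str]:
        if key in self.cache:
            return self.cache[key]
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value
        return value

    def simulate_cache_restart(self) -> None:
        # Losing the contents of RAM costs speed, never data.
        self.cache.clear()
```

After `simulate_cache_restart()`, every `read` still returns the correct value; it is just slower once, until the cache is repopulated.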

Redis vs. Memcached: Choosing the Right Engine

While both are industry standards, their architectures serve different use cases:

- Memcached: Highly optimized for simple key-value pairs. It is multithreaded and designed for simplicity and massive horizontal scaling where data structure complexity is low.

- Redis: More than a cache; it is an In-Memory Data Structure Store. It supports strings, hashes, lists, sets, and sorted sets. It also offers advanced features like Pub/Sub, Lua scripting, and optional persistence (RDB/AOF).
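To make the difference concrete, here is a small sketch of something a sorted set handles server-side: ranking products by view count. The functions assume `client` is a redis-py `redis.Redis` instance (for example, created with `decode_responses=True` so members come back as `str`); the `product:views` key name is hypothetical. Memcached has no sorted-set type, so the same ranking would have to be computed in the application.

```python
from typing import Any, List, Tuple

VIEWS_KEY = "product:views"  # hypothetical key name for the sorted set


def record_view(client: Any, product_id: str) -> None:
    """Add one view to a product's score in the sorted set."""
    # redis-py signature: zincrby(name, amount, value)
    client.zincrby(VIEWS_KEY, 1, product_id)


def top_products(client: Any, limit: int) -> List[Tuple[str, float]]:
    """Return the `limit` most-viewed products, highest score first."""
    # zrevrange uses inclusive indexes, so 0..limit-1 yields `limit` items.
    return client.zrevrange(VIEWS_KEY, 0, limit - 1, withscores=True)
```

Redis keeps the set ordered by score, so the application never has to load and sort all the counters itself.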

Conclusion

Caching is not just an optimization; it is a fundamental requirement for modern performance engineering. By moving frequently accessed data from the high-latency disk layer to the low-latency RAM layer, you significantly reduce response times and system overhead. Whether you are building a simple website or a complex microservices mesh, a disciplined caching strategy is the key to building truly responsive applications.

Happy Engineering! 🚀🛡️
