High-Availability Load Balancing with NGINX and Flask

Horizontal scaling is the bedrock of production web systems. Explore the architectural workflow of deploying a resilient Flask cluster behind an NGINX load balancer, covering multi-node containerization, isolated bridge networking, and the configuration of upstream traffic groups.

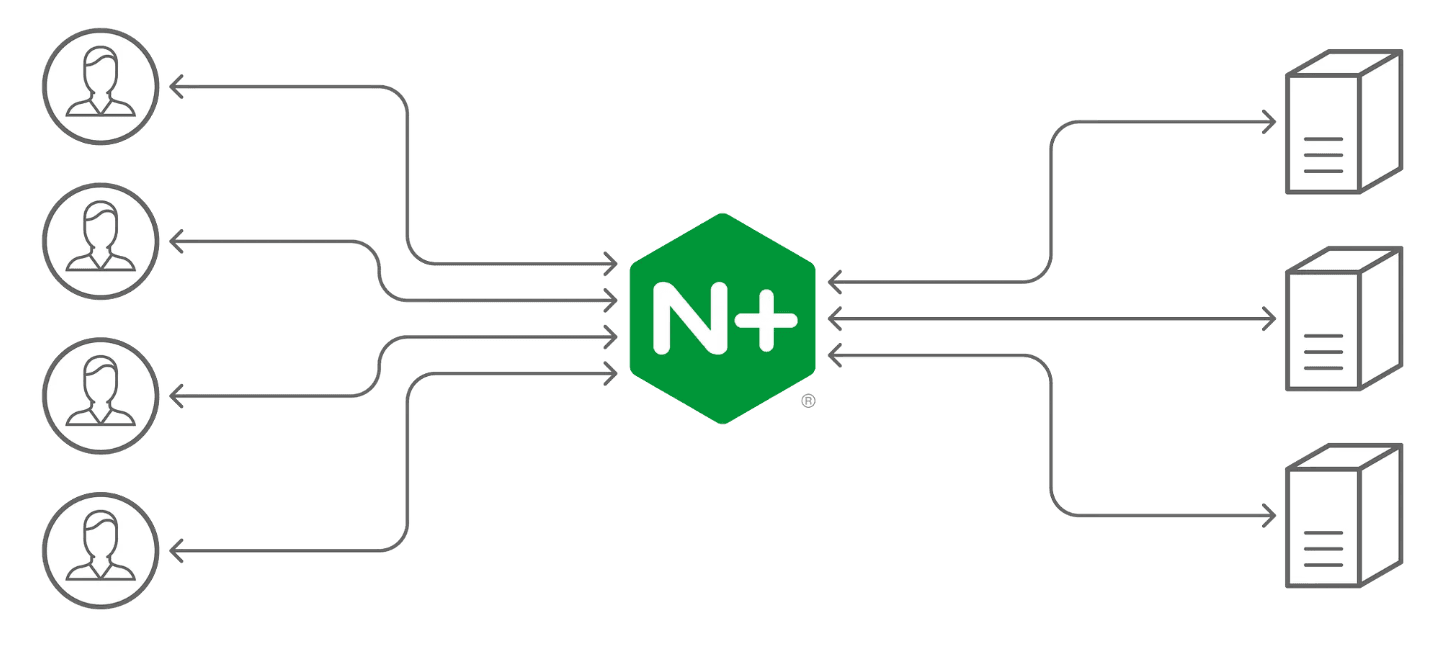

In a production web environment, relying on a single application instance creates a single point of failure. To build for scale and resilience, we must adopt the Horizontal Scaling Pattern: running multiple redundant copies of an application and distributing traffic across them.

NGINX sits at the front of this architecture as a reverse proxy and high-performance load balancer: it accepts public traffic and distributes it across the internal application nodes.

Phase 1: Hardened Containerization (The Flask Node)

Our application is built using the Python Flask framework. To ensure a production-grade container, we use a non-root security model and a lightweight Alpine Linux base.

The Blueprint (Dockerfile)

The Logic Node (app.py)

A simple endpoint returns the application version, which lets us see the load-balancing rotation in the responses.
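A minimal sketch of such an endpoint, under a few assumptions that are not from the original: the version is read from a hypothetical `APP_VERSION` environment variable, the response is JSON, and the container hostname is included to make the rotation easier to spot.

```python
import os
import socket

from flask import Flask, jsonify

app = Flask(__name__)

# APP_VERSION is a hypothetical environment variable; each replica would be
# started with a different value so responses reveal which node answered.
APP_VERSION = os.environ.get("APP_VERSION", "v1")


@app.route("/")
def index():
    # Return the version plus the container hostname as JSON.
    return jsonify(version=APP_VERSION, host=socket.gethostname())
```

Inside a container the app has to listen on all interfaces so that other containers can reach it, for example with `flask --app app run --host 0.0.0.0 --port 5000` on recent Flask versions. Port 5000 is an assumption here as well.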

Phase 2: Isolated Fabric (Networking)

To allow the containers to reach each other by name, we create a dedicated user-defined bridge network. Docker resolves container names on such a network, and as long as the application containers publish no ports, they are reachable only from inside it, not from the public internet.

Phase 3: Cluster Instantiation

We deploy three distinct replicas of our application. Connecting them to the same network lets NGINX address each one by container name in its upstream group.
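Phases 2 and 3 can be sketched together as the Docker CLI calls they imply. The snippet below only builds and prints the commands; the network name `flask-net`, the image tag `flask-node:latest`, and the `APP_VERSION` variable are hypothetical placeholders, while `app1` to `app3` are the replica names used in this article.

```python
import shlex

NETWORK = "flask-net"        # hypothetical network name
IMAGE = "flask-node:latest"  # hypothetical tag for the image built from the Dockerfile
REPLICAS = ["app1", "app2", "app3"]


def network_command(network):
    # A user-defined bridge network lets containers resolve each other by name.
    return ["docker", "network", "create", "--driver", "bridge", network]


def replica_command(name, version, network=NETWORK, image=IMAGE):
    # No -p flag: the replica is reachable only from inside the network.
    return [
        "docker", "run", "-d",
        "--name", name,
        "--network", network,
        "-e", f"APP_VERSION={version}",
        image,
    ]


commands = [network_command(NETWORK)]
commands += [
    replica_command(name, f"v{i}") for i, name in enumerate(REPLICAS, start=1)
]

for cmd in commands:
    # Printed rather than executed; subprocess.run(cmd, check=True) would run it.
    print(shlex.join(cmd))
```

Keeping each command as an argument list avoids shell-quoting problems if the commands are later passed to `subprocess.run`.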

Phase 4: The Load Balancing Architecture (NGINX)

The NGINX configuration acts as the brain of the cluster. We use an upstream block to define our cluster members and a proxy_pass directive to route traffic.

The Blueprint (default.conf)
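A minimal sketch of what such a file might contain, rendered from Python so the shape of the upstream and server blocks is explicit. The upstream name `flask_cluster` and the application port 5000 are assumptions; `app1` to `app3` are the replica names used in this article.

```python
UPSTREAM_NAME = "flask_cluster"      # hypothetical upstream group name
BACKENDS = ["app1", "app2", "app3"]  # container names on the bridge network
APP_PORT = 5000                      # assumed port the Flask nodes listen on


def render_default_conf(backends, port=APP_PORT, upstream=UPSTREAM_NAME):
    # One "server" line per replica; NGINX falls back to round-robin when
    # no other balancing method is named in the upstream block.
    servers = "\n".join(f"    server {name}:{port};" for name in backends)
    return (
        f"upstream {upstream} {{\n"
        f"{servers}\n"
        "}\n"
        "\n"
        "server {\n"
        "    listen 80;\n"
        "\n"
        "    location / {\n"
        f"        proxy_pass http://{upstream};\n"
        "    }\n"
        "}\n"
    )


print(render_default_conf(BACKENDS))
```

Generating the file from a list of backends is only one option. For a fixed three-node cluster, writing the same text by hand works just as well.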

Launching the Gateway Node

Finally, we initialize the NGINX container, binding port 80 to the host and mounting our custom configuration.

Phase 5: Verification and Resilience
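One way to verify the rotation is to call the gateway repeatedly and count which version answers. The sketch below assumes NGINX is published on `http://localhost/` and that each node replies with JSON containing a `version` field; both are assumptions, not details from the original setup. The counting logic is kept separate from the network call so it can be tried offline.

```python
import json
from collections import Counter
from itertools import cycle
from urllib.request import urlopen

LB_URL = "http://localhost/"  # assumes NGINX is published on host port 80


def fetch_version(url=LB_URL):
    # Assumes each node answers with JSON containing a "version" field.
    with urlopen(url, timeout=5) as resp:
        return json.load(resp)["version"]


def tally(fetch, requests=9):
    # Call the fetch function repeatedly and count the answers per version.
    return Counter(fetch() for _ in range(requests))


# Offline demonstration with a stand-in for the network call.
fake_responses = cycle(["v1", "v2", "v3"])
print(tally(lambda: next(fake_responses), requests=9))
```

Against a running cluster, `tally(fetch_version)` would replace the stand-in. With three healthy nodes and default round-robin, the counts should come out roughly even.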

Why this scales:

Fault Tolerance: If app1 crashes, requests sent to it fail, NGINX marks it as unavailable, and traffic continues on app2 and app3 until app1 recovers.

Resource Optimization: Each container is isolated, so you can limit CPU and memory usage per node to prevent "noisy neighbor" issues.

Zero-Downtime Updates: You can replace one container at a time with a new image version while the others keep serving, then reload NGINX gracefully so in-flight requests are allowed to finish.
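The fault-tolerance behaviour can be illustrated with a toy model. This is not NGINX's implementation: open-source NGINX decides that a server is unavailable from failed connection attempts (tunable with the `max_fails` and `fail_timeout` server parameters), whereas the sketch simply takes "down" as given and shows how round-robin selection skips such a node.

```python
from itertools import cycle


class RoundRobin:
    """Toy model of round-robin selection that skips nodes marked as down."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.down = set()
        self._ring = cycle(self.nodes)

    def mark_down(self, node):
        self.down.add(node)

    def mark_up(self, node):
        self.down.discard(node)

    def pick(self):
        # Try each node at most once per pick; fail if none is available.
        for _ in range(len(self.nodes)):
            node = next(self._ring)
            if node not in self.down:
                return node
        raise RuntimeError("no upstream available")


lb = RoundRobin(["app1", "app2", "app3"])
print([lb.pick() for _ in range(3)])  # healthy rotation across all nodes
lb.mark_down("app1")                  # simulate a crash of app1
print([lb.pick() for _ in range(4)])  # traffic continues on app2 and app3
```

Once `mark_up("app1")` is called, the node rejoins the rotation, which loosely mirrors NGINX retrying a server after its failure timeout expires.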

Conclusion

Horizontal scaling with NGINX is an essential skill for building modern, resilient web services. By decoupling your application logic from your traffic delivery layer, you achieve the stability and performance required for enterprise-grade deployments.
